How FireHydrant Creates Data in Rails

How FireHydrant is built to support creating data in our integration-ready platform.

At FireHydrant, we built a platform for integrations from the beginning. This has a lot of implications for the way that data gets created in and read out of your application, however. For example, how does a user differ from an integration reading data? Do you make API tokens on users or create a separate model called Bot? (Hint: you create the Bot.) These questions are something that all engineering teams need to solve when building a B2B SaaS product that others can also integrate with. In this blog post, we'll talk about how FireHydrant is built to support creating data in our integration-ready platform.

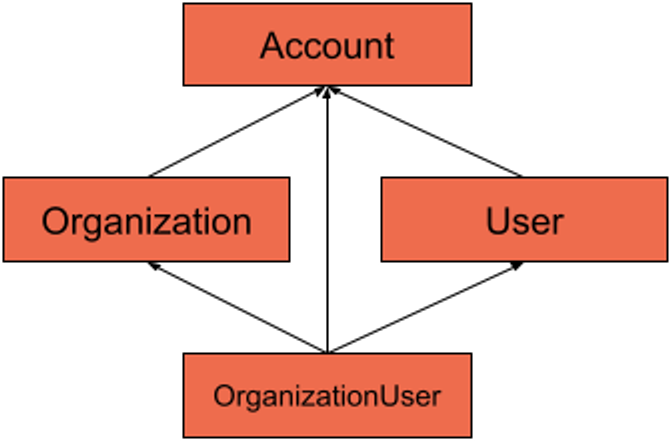

Data Model

One of the first things that makes a B2B company different when getting started is the initial data model. Traditionally you read tutorials on how to make a user-based data model (users sign up and use your services). These lessons change, though, when you introduce the concept of a company signing up for your services and adding users. For example, your company might be using Google for your company email. You as the individual are not paying for it; your company is. So how do you build an application that supports this model?

For FireHydrant, we have 4 models to enable this idea:

- Account

- Organization

- User

- OrganizationUser

In this model, you can see that Account sits at the top and everything has an account_id reference to it (more on this later). Accounts also have many Organizations, and Organization has many users through the OrganizationUser model.

Account is the model where all of the high-level detail about a customer lives. Remember, our application is for businesses and their users, not only individuals. By placing a model called "Account" above everything else, we can put billing information, addresses, roles, etc. at the top. In our app, Account is more or less a "god model", but it has no responsibility other than to be a place everything can point to.

The other model you might see here and go "wtf mate?" is the Organization model. The reason we have this model in our stack is to support very large companies with several divisions, each with hundreds of users. If you think about a company like Microsoft, you might have an Organization for Azure and another for Office365, but the account is still just "Microsoft."

Our models are represented like so:

```ruby
class Account < ActiveRecord::Base
  has_many :users
  has_many :organizations

  validates :name, presence: true
end

class Organization < ApplicationRecord
  has_many :organization_users, dependent: :destroy
  has_many :users, through: :organization_users, dependent: :destroy
end

class OrganizationUser < ApplicationRecord
  belongs_to :user
  belongs_to :organization
end

class User < ApplicationRecord
  has_many :organization_users, dependent: :destroy
  has_many :organizations, through: :organization_users
end
```

The astute reader might have noticed that every model here inherits from the ApplicationRecord class except for the Account model.

This is because we have a non-nullable account_id on every model in our stack (minus the Account model). We then have this ApplicationRecord class definition:

```ruby
class ApplicationRecord < ActiveRecord::Base
  self.abstract_class = true

  belongs_to :account
end
```

This forces all of our models to have an association to the account record that owns that piece of data in our database.
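To make the effect of that base class concrete without a database, here is a plain-Ruby sketch of the same rule. `SketchAccount`, `TenantRecord`, and `SketchIncident` are hypothetical names made up for illustration, not FireHydrant classes. In the real application, the `belongs_to :account` association and the non-nullable column do this work.

```ruby
# Plain-Ruby stand-in for an abstract base class that requires an owning account.
# All names here are hypothetical; no ActiveRecord is involved.
SketchAccount = Struct.new(:id, :name)

class TenantRecord
  attr_reader :account, :errors

  def initialize(account: nil)
    @account = account
    @errors = []
  end

  # Mirrors the idea of a required association: no account, no save.
  def save
    @errors = []
    @errors << "Account must exist" if account.nil?
    @errors.empty?
  end
end

class SketchIncident < TenantRecord
  attr_reader :name

  def initialize(name:, account: nil)
    super(account: account)
    @name = name
  end
end

acme = SketchAccount.new(1, "Acme")
owned = SketchIncident.new(name: "DB outage", account: acme)
orphan = SketchIncident.new(name: "No owner")

puts owned.save   # true
puts orphan.save  # false
puts orphan.errors.inspect
```

Because the rule lives in the base class, a new model picks it up just by inheriting, which is the property the ApplicationRecord definition above relies on.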

Now that we have a model that allows us to create data in a tenanted fashion, let's get on to the juicy bits.

Creating Data

We're an incident response tool, so naturally, we have a model in our Rails application called Incident, but incidents can be created by people, bots, and integrations. In most Rails applications where data is created by a user, you'll typically see a belongs_to :user line in the model. This is so you can see who authored (or owns) that record. For us, though, this doesn't work when you have multiple types of actors that can create data.

To solve this, we have an object pattern called Creators in our application. The idea of a creator is that it handles all of the logic necessary to create data. Rails typically recommends having a controller action create data with your model class directly. For us, though, this simply isn't practical given how many different ways data can be created.

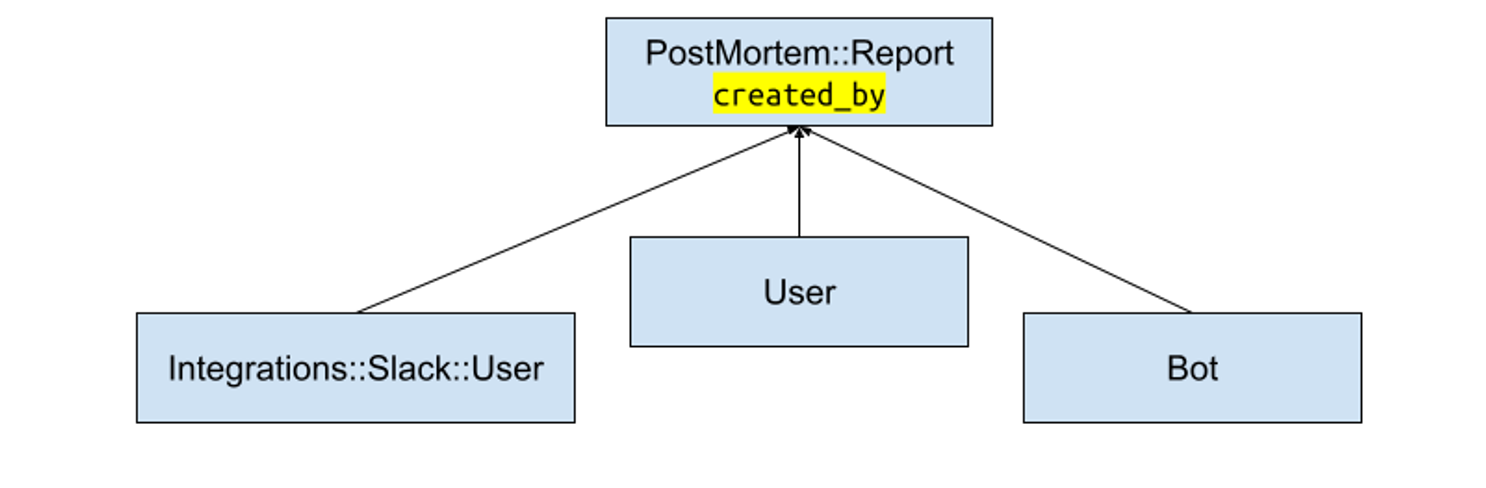

For example, our PostMortems::Report model has this definition:

```ruby
class PostMortems::Report < ApplicationRecord
  validates :name, presence: true

  belongs_to :created_by, polymorphic: true
  belongs_to :incident
end
```

Notice how our created_by association is marked as polymorphic. This means we can associate any model on our PostMortems::Report as the actor that created it. This was a weird thing to start doing, but it has made our application so flexible for integrations that I wouldn't do it any other way now.
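Under the hood, a polymorphic `belongs_to` persists two values, a type and an id (by Rails convention, `created_by_type` and `created_by_id` columns). The sketch below mimics that in plain Ruby so the idea is visible without a database. `SketchUser`, `SketchBot`, and `SketchReport` are hypothetical names for illustration only.

```ruby
# Hypothetical actor types; in a Rails app these would be models.
SketchUser = Struct.new(:id, :email)
SketchBot  = Struct.new(:id, :name)

class SketchReport
  attr_reader :name, :created_by_type, :created_by_id

  def initialize(name:)
    @name = name
  end

  # Store the actor as a (class name, id) pair, which is the shape a
  # polymorphic association persists.
  def created_by=(actor)
    @created_by_type = actor.class.name
    @created_by_id = actor.id
  end
end

by_user = SketchReport.new(name: "Postmortem A")
by_user.created_by = SketchUser.new(7, "dev@example.com")

by_bot = SketchReport.new(name: "Postmortem B")
by_bot.created_by = SketchBot.new(3, "deploy-bot")

puts [by_user.created_by_type, by_user.created_by_id].inspect
puts [by_bot.created_by_type, by_bot.created_by_id].inspect
```

The report never needs to know which kinds of actors exist, so a new integration type can author records without a schema change.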

But creating these objects can be weird, since an API endpoint, controller action, or even just a Sidekiq job needs a lot more context now. This is where our creator pattern emerged.

An example of one of our production creator objects is for postmortem reports:

```ruby
creator = ::PostMortems::ReportCreator.new(
  incident,
  actor: current_user,
  account: current_account
)

result = creator.create(params)
```

All creators take a "scope" as their first parameter. Since everything below an account belongs to something, it makes sense to have your initializer accept the thing that the data will ultimately belong to when saved.

Secondly, we have 2 keyword arguments: the actor creating the data and the account that will own it. An actor could be a User, a Bot, or even an Integrations::Slack::Connection object. Since the created_by field is polymorphic, we can accept any model type in this field.

Then our creator object has a #perform method that is called by our #create method you see in the example above. It looks like this:

```ruby
class PostMortems::ReportCreator < ApplicationCreator
  private

  alias incident scope
  delegate :organization, to: :incident

  def perform(params = {})
    allowed = AllowedParams.new(params)
    report = PostMortems::Report.new(allowed.to_h)
    result = WriterResult.new(report)

    PostMortems::Report.transaction do
      report.created_by = actor
      report.incident = incident
      report.account = account

      unless report.save
        result.append_errors(report)
        raise ActiveRecord::Rollback
      end
    end

    # Return the result explicitly; the transaction block's own value is not the WriterResult
    result
  end

  class AllowedParams < WriterParams
    property :name
    property :summary
    property :tag_list
  end
end
```

From the top, you can see we inherit from our ApplicationCreator class. This is where #create is defined, which delegates to the #perform method in this object but wraps it with logs and metrics (another huge advantage that we'll talk about later).

You can see we have another object called AllowedParams. Rails provides StrongParams, but what if the params didn't come from a controller action... should we forgo all parameter sanitization because of that? Of course not! So we allow parameters in from anywhere but only pass the ones we care about to our model using this class definition.
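The WriterParams base class is not shown in this post, so the following is only a guess at the smallest thing that could back an AllowedParams class: a class-level `property` declaration plus a `to_h` that drops everything undeclared. `SketchParams` and `ReportParams` are hypothetical names.

```ruby
# Hypothetical stand-in for WriterParams; the real class is not shown in this post.
class SketchParams
  # Each subclass keeps its own list of allowed keys.
  def self.properties
    @properties ||= []
  end

  def self.property(name)
    properties << name.to_sym
  end

  def initialize(params)
    # Accept string or symbol keys, since params may come from HTTP, jobs, or chat commands.
    @params = params.to_h.transform_keys(&:to_sym)
  end

  # Only declared properties that were actually supplied survive.
  def to_h
    @params.slice(*self.class.properties)
  end
end

class ReportParams < SketchParams
  property :name
  property :summary
  property :tag_list
end

raw = { "name" => "DB outage review", "summary" => "Root cause: failover", "account_id" => 999 }
puts ReportParams.new(raw).to_h.inspect
```

The `account_id` key is silently dropped here, which is the point: the caller cannot pick the owning account through params, because the creator assigns it from its own `account:` argument.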

Next, we assign the created by field, incident, and our account. From there, we attempt to save the object, and if it fails we take the errors from the report model object and append them to our WriterResult object. WriterResult is pretty simple:

```ruby
class WriterResult < Struct.new(:object)
  def success?
    errors.empty?
  end
  alias successful? success?

  def errors?
    errors.any?
  end

  def append_errors(object)
    errors.merge!(object.errors)
  end

  def errors
    @errors ||= ActiveModel::Errors.new(self)
  end
end
```

This object makes returning a result from a #create call easy, and allows us to return either the object created or its errors. ActiveModel::Errors has a mutating method, merge!, that merges other instances of the class into itself. This allows us to merge errors from other objects into the result object.

Folder Structure

Because Rails adds all folders in the `app/` directory to the autoload paths, we have 3 folders for our object mutation classes:

```
app/creators
app/updaters
app/destroyers
```

So our postmortem creator class lives at:

```
app/creators/post_mortems/report_creator.rb
```

Usage

You can see how we use it in our PostMortems API endpoint:

```ruby
post do
  incident = current_organization.incidents.find(params[:incident_id])
  creator = ::PostMortems::ReportCreator.new(incident, **actor_and_account)
  result = creator.create(params)

  if result.success?
    present result.object, with: PublicAPI::V1::PostMortems::ReportEntity
  else
    status 400
    present PublicAPI::V1::Error.new(detail: "could not create report", messages: result.errors.full_messages), with: PublicAPI::V1::ErrorEntity
  end
end
```

Pretty simple, right? Any other entry point for creating a PostMortems::Report object looks exactly the same, which creates consistency throughout the entire application.

There are other advantages to using this pattern as well. Take permissions, for example. Permission checks can now live in our creator and updater objects. And because we're returning a WriterResult object, we can append any permission errors to that result instead of handling them separately at each entry point.
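As a rough illustration of permissions living inside a creator, here is a plain-Ruby sketch. `SketchResult` is a simplified stand-in for WriterResult that keeps messages in an array, and the `can_create_reports` flag on `SketchActor` is a hypothetical permission model invented for this example. The post does not describe how FireHydrant actually checks permissions.

```ruby
# Simplified stand-in for WriterResult that keeps error messages in an array.
class SketchResult
  attr_reader :object, :errors

  def initialize(object)
    @object = object
    @errors = []
  end

  def success?
    errors.empty?
  end
end

# Hypothetical actor with a single boolean permission.
SketchActor = Struct.new(:name, :can_create_reports)

class SketchReportCreator
  def initialize(actor:)
    @actor = actor
  end

  def create(params)
    report = { name: params[:name] }
    result = SketchResult.new(report)

    # The permission check lives next to the write, so every entry point gets it.
    unless @actor.can_create_reports
      result.errors << "#{@actor.name} is not allowed to create reports"
      return result
    end

    result.errors << "Name can't be blank" if report[:name].to_s.empty?
    result
  end
end

allowed = SketchReportCreator.new(actor: SketchActor.new("alice", true)).create(name: "Review")
denied  = SketchReportCreator.new(actor: SketchActor.new("bot-7", false)).create(name: "Review")

puts allowed.success?
puts denied.errors.inspect
```

Callers handle a permission failure exactly like a validation failure: check `success?` and render `errors`.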

Telemetry

Another huge advantage of using a single object for creating any data in your application is telemetry. Logs and metrics are not as readily available for normal model creates if you're doing controller -> model. With our creator objects, however, we can log and emit metrics for everything with ease.

Here is a stripped version of our ApplicationCreator class:

```ruby
class ApplicationCreator
  def initialize(scope, actor:, account:)
    @scope = scope
    @actor = actor
    @account = account
  end

  # #create calls the private #perform method all creators must implement
  # #perform must return a WriterResult
  def create(params = {})
    perform(params)
  end

  private

  attr_reader :scope, :actor, :account
end
```

Notice how our create method here just delegates to the #perform method on the class. Because all of our creator objects inherit from it, we have the ability to wrap the logic with telemetry:

```ruby
def create(params = {})
  Instrumentation.counter(:create, { creator: self.class.name }, 1) do
    perform(params)
  end
end
```

The instrumentation in our Rails application allows us to tag metrics in Prometheus. In this instance we are counting and timing the create call for our creator class, making it easy to see how long our create calls are really taking.

Logs become extremely simple now as well:

```ruby
def create(params = {})
  # Assumes Instrumentation.counter returns the block's value, as the previous snippet does
  result = Instrumentation.counter(:create, { creator: self.class.name }, 1) do
    perform(params)
  end

  Rails.logger.info({ event: "create", creator: self.class.name, account_id: account.id, actor_type: actor.class.name, actor_id: actor.id })

  # Return the WriterResult rather than the logger's return value
  result
end
```

Having logs for when creators are called is incredibly valuable, and we also have the ability to see the account and actor that performed the action because we enforce that all creates include that information.
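The Instrumentation module is internal to FireHydrant and not shown here, so the sketch below swaps in a hypothetical in-memory `SketchMetrics` module and the standard library `Logger` to show the shape of the wrapper: count the call, time it, log it, and still hand the creator's result back to the caller. Every name in it is an assumption made for illustration.

```ruby
require "logger"
require "json"
require "stringio"

# Hypothetical in-memory stand-in for a metrics client.
module SketchMetrics
  def self.counts
    @counts ||= Hash.new(0)
  end

  def self.timings
    @timings ||= Hash.new { |hash, key| hash[key] = [] }
  end

  # Count the call, time the block, and hand back the block's return value.
  def self.measure(name, tags)
    key = [name, tags]
    counts[key] += 1
    started = Process.clock_gettime(Process::CLOCK_MONOTONIC)
    begin
      yield
    ensure
      timings[key] << Process.clock_gettime(Process::CLOCK_MONOTONIC) - started
    end
  end
end

# Log to an in-memory buffer so the sketch has no side effects.
LOG_OUTPUT = StringIO.new
LOGGER = Logger.new(LOG_OUTPUT)

class SketchCreator
  def initialize(actor_name:)
    @actor_name = actor_name
  end

  # Public entry point: wraps the subclass's #perform with metrics and a log line.
  def create(params = {})
    result = SketchMetrics.measure(:create, { creator: self.class.name }) { perform(params) }
    LOGGER.info(JSON.generate({ event: "create", creator: self.class.name, actor: @actor_name }))
    result
  end
end

class SketchNoteCreator < SketchCreator
  private

  def perform(params)
    { body: params[:body] }
  end
end

note = SketchNoteCreator.new(actor_name: "alice").create(body: "Rolled back deploy")
puts note.inspect
puts SketchMetrics.counts.inspect
puts LOG_OUTPUT.string
```

Subclasses only implement `perform`, so a new creator gets the counter, the timing, and the log line without any extra code.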

Using Google PubSub in Creators

Our application makes use of Google’s PubSub product to notify other parts of our application of an event occurring. For example, when a note is added to an incident timeline, we push a message to PubSub after the note is created in our creator object. This means that anytime we create a note (via the API, UI, or Slack integration), a message is published the same exact way and all of the other systems can react accordingly.

```ruby
class Incidents::NoteCreator < ApplicationCreator
  private

  alias incident scope

  def perform(params)
    allowed = AllowedParams.new(params)
    note = Event::Note.new(allowed.to_h)
    note.account = account
    result = WriterResult.new(note)

    ActiveRecord::Base.transaction do
      incident_event = IncidentEvent.new(event: note, created_by: actor, incident: incident, account: account)

      if params[:visibility].present?
        incident_event.visibility = params[:visibility]
      end

      unless note.save && incident_event.save
        result.append_errors(note)
        result.append_errors(incident_event)
        # Roll back so a saved note is never committed without its timeline event
        raise ActiveRecord::Rollback
      end

      publisher = Incidents::EventPublisher.new(incident)
      publisher.publish(incident_event, 'created')
    end

    result
  end

  class AllowedParams < WriterParams
    property :body
  end

  private_constant :AllowedParams
end
```

This creator does quite a few things for us:

- Creates a note record

- Ties that record to an incident's timeline (that's our IncidentEvent model)

- Publishes our incident event to PubSub

This object has a ton of specs to ensure all of these cases work as designed. It is complex, but it's as complex as it needs to be for our uses. Because of this object, though, adding a note to an incident via our Slack integration is extremely simple:

```ruby
class Integrations::Slack::Commands::NewNote < Integrations::Slack::Commands::BaseCommand
  include Integrations::Slack::Commands::LinkedAccountCheck
  include Integrations::Slack::Commands::ActiveChannelCheck

  def to_channel_response
    creator = Incidents::NoteCreator.new(current_incident, actor: authed_provider.user, account: account)
    result = creator.create(body: parsed.string_args)
    errors.merge!(result.errors)

    nil
  end

  private

  def parsed
    @parsed ||= intent.parsed_original_text
  end
end
```

We'll leave some parts of this to the imagination. The key point is that it's only a few lines of code to create a note, and it's also the same exact lines (give or take a few parameter changes) in our API too.
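To show why routing every entry point through one creator keeps published messages uniform, here is a small in-process sketch. `SketchBus` is a hypothetical stand-in for a message bus and is not the Google PubSub client. The topic name and actor strings are also made up, and the real Incidents::EventPublisher is not shown in this post.

```ruby
# Hypothetical in-process stand-in for a message bus; not the Google PubSub client.
class SketchBus
  attr_reader :messages

  def initialize
    @messages = []
  end

  def publish(topic, payload)
    @messages << { topic: topic, payload: payload }
  end
end

class BusNoteCreator
  def initialize(incident_id, actor:, bus:)
    @incident_id = incident_id
    @actor = actor
    @bus = bus
  end

  # Every caller goes through here, so the published message has one shape.
  def create(body:)
    return nil if body.to_s.strip.empty? # nothing saved, nothing published

    note = { incident_id: @incident_id, body: body, created_by: @actor }
    @bus.publish("incident.note.created", note)
    note
  end
end

bus = SketchBus.new

# Two different entry points, one code path.
BusNoteCreator.new(42, actor: "api-token:ci", bus: bus).create(body: "Deploy paused")
BusNoteCreator.new(42, actor: "slack:alice", bus: bus).create(body: "Investigating")
BusNoteCreator.new(42, actor: "slack:alice", bus: bus).create(body: "  ")

puts bus.messages.length
puts bus.messages.map { |message| message[:topic] }.uniq.inspect
```

Subscribers only ever see one topic and one payload shape, regardless of whether the note came from the API or from Slack, and an invalid note publishes nothing.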

Breakdown

After building several Rails applications that have to provide public APIs and are B2B products, I think this is the right way forward for us. We've been using this pattern in production for months and haven't seen many cracks with it just yet.

We're betting on our future with this pattern too. It is significantly more difficult to go back to every entry point of data creation/mutation to add logs, telemetry, queue publishing, etc after a few years of operation. Depending on the size of your application, you could be looking at years of dedicated work to accomplish these things.

I hope this was helpful. If you have any questions about the stack or our decisions, or if you want to tell me this is a horrible idea, my Twitter handle is @bobbytables.
